Welcome to Research Log #023, and happy new year! We document weekly research progress across the initiatives in the Manifold Research Group and, in the Pulse of AI, highlight breakthroughs from the broader research community that we find interesting!

We’re growing our core team and pursuing new projects. If you’re interested in working together, join the conversation on Discord and check out our GitHub.

NEKO

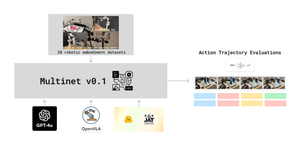

The NEKO Project aims to build the first large-scale, open-source "Generalist" Model, trained on numerous modalities including control and robotics tasks. You can learn more about it here.

- Datasets: We have a proposal for a Version 0 of the dataset for the Vision + Language modality! This proposal matters because the final model will be trained on data aligned with this dataset. You can read it here, and jump into the conversation on our Discord.

We are currently in the process of planning for Q1. Stay tuned to read more of what we have planned for this year!

Agent Forge

The AgentForge Project aims to build models, tools, and frameworks that allow anyone to build much more powerful AI agents capable of using tools and interacting with the digital and physical worlds. This week, our survey work focuses on the paper "AutoGen: Enabling Next-Gen LLM Applications via Multi-Agent Conversation". We are also planning what is ahead for Q1, so keep your eyes peeled!

Pulse of AI

There have been some exciting advancements this week!

- TinyLlama: A compact 1.1B-parameter language model pre-trained on a corpus of approximately 1 trillion tokens for about three epochs. Built on the Llama 2 architecture and tokenizer, TinyLlama incorporates advancements from the open-source community, such as FlashAttention, to improve computational efficiency. Despite its relatively small size, TinyLlama performs well across diverse downstream tasks, surpassing existing open-source language models of comparable size. You can check their repo or their paper.

- Towards Conversational Diagnostic AI: Researchers from Google DeepMind introduced AMIE, a Large Language Model (LLM)-based AI system designed for diagnostic dialogues, which outperformed primary care physicians in the paper's study. AMIE's diagnostic accuracy is noteworthy and marks a significant milestone for conversational diagnostic AI, but further research is needed before it can be translated to real-world settings. Dive into the paper here.

- LLM in a flash: Researchers at Apple have introduced a way to run Large Language Models (LLMs) on devices whose DRAM is too small to hold the full model, by keeping the parameters in flash memory and loading them on demand. The method combines "windowing," which reuses recently activated neurons to cut down data transfer, with "row-column bundling," which lets flash memory reads fetch larger contiguous chunks. Together, the two techniques yield a 4x-5x (CPU) and 20x-25x (GPU) increase in inference speed compared to naive loading approaches. If you are interested, read more in their paper.
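
For readers who want a feel for the two ideas in the LLM in a flash item, here is a toy sketch in Python. It is not the paper's implementation: the class `NeuronWindowCache`, the function `bundle_rows_and_columns`, and the tiny matrices are all hypothetical names and data made up for illustration, and the real system works on sparse feed-forward layers with actual flash reads rather than Python sets and lists.

```python
from __future__ import annotations

from collections import deque


class NeuronWindowCache:
    """Toy model of "windowing": neurons used by the last `window` tokens stay in memory."""

    def __init__(self, window: int):
        self.window = window
        self.recent = deque()  # one set of active neuron ids per recent token

    def step(self, active: set[int]) -> set[int]:
        """Return the neuron ids that would have to be read from flash for this token."""
        cached = set().union(*self.recent)  # everything still held in memory
        to_load = active - cached           # only the missing neurons are fetched
        self.recent.append(set(active))
        if len(self.recent) > self.window:
            self.recent.popleft()           # the oldest token slides out of the window
        return to_load


def bundle_rows_and_columns(up_proj, down_proj):
    """Toy "row-column bundling": store column i of the up projection next to
    row i of the down projection, so one contiguous read fetches both.

    Assumes up_proj has shape (d_model, d_ff) and down_proj has shape
    (d_ff, d_model), both given as plain lists of lists.
    """
    d_ff = len(down_proj)
    return [[row[i] for row in up_proj] + list(down_proj[i]) for i in range(d_ff)]


if __name__ == "__main__":
    cache = NeuronWindowCache(window=2)
    for active in [{1, 2, 3}, {2, 3, 4}, {1, 4, 5}]:
        print(sorted(active), "-> load", sorted(cache.step(active)))

    up = [[1, 2], [3, 4], [5, 6]]          # d_model=3, d_ff=2
    down = [[10, 20, 30], [40, 50, 60]]    # d_ff=2, d_model=3
    print(bundle_rows_and_columns(up, down))
```

In this sketch, consecutive tokens that activate overlapping neurons trigger few new loads, which is the intuition behind windowing; bundling simply doubles the size of each contiguous read per neuron.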

If you want to see more of our updates as we work to explore and advance the field of Intelligent Systems, follow us on Twitter, LinkedIn, and Mastodon!